Experiments in character consistency

Trying to keep Kabu consistent in Midjourney was harder than expected. Here’s what I learned about character workflows, omni-reference, and why ComfyUI might be the next step.

Over the past few weeks I’ve been experimenting pretty deeply with Midjourney and Wan 2.2, trying to solve one big problem: how do you get consistent characters across different images?

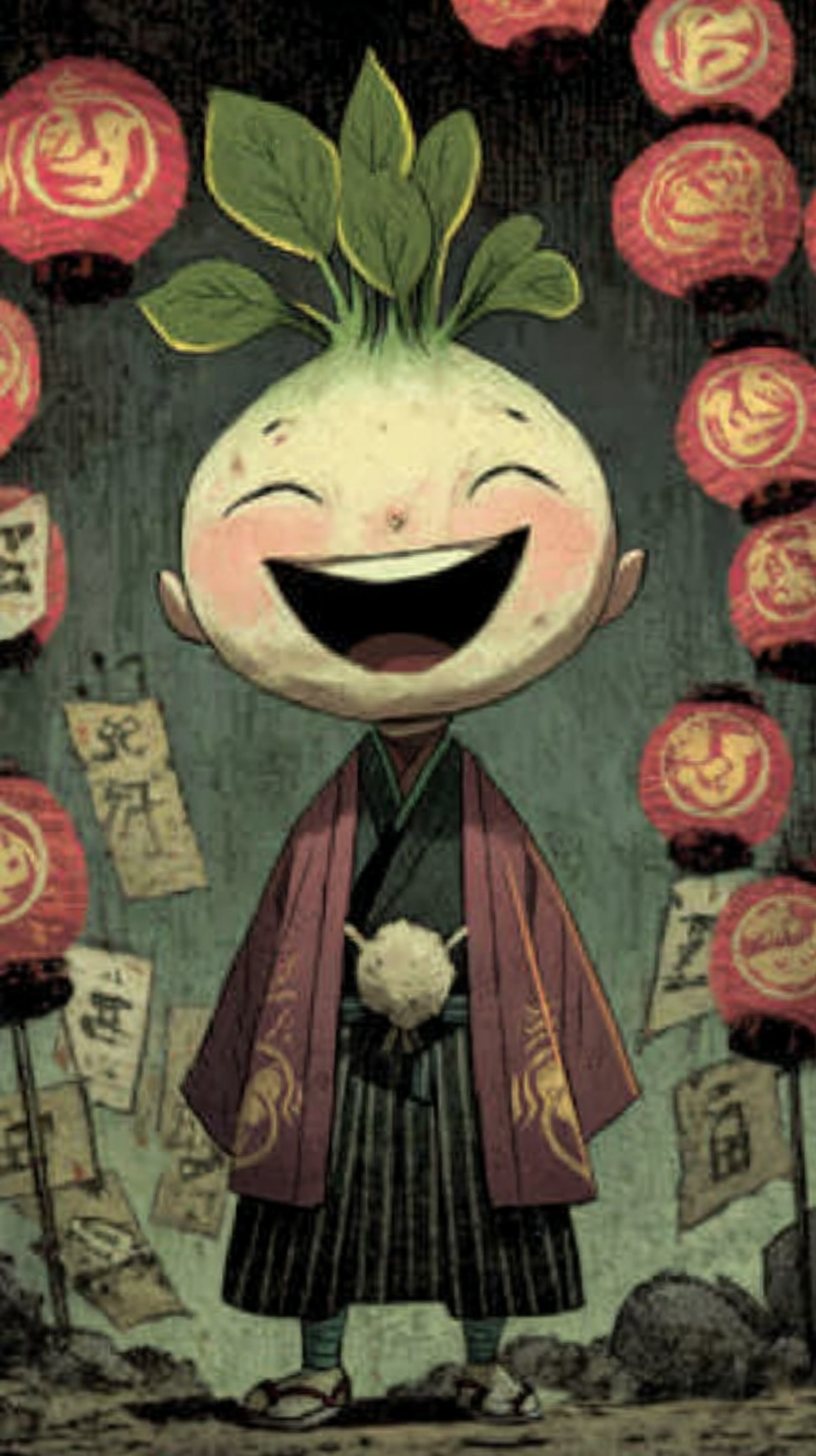

Omni-reference is useful, but only to a point. When I started working on Kabu, it was surprisingly difficult to keep him consistent. I assume that’s partly because there aren’t exactly a lot of anthropomorphic turnip-headed boys in Midjourney’s training data.

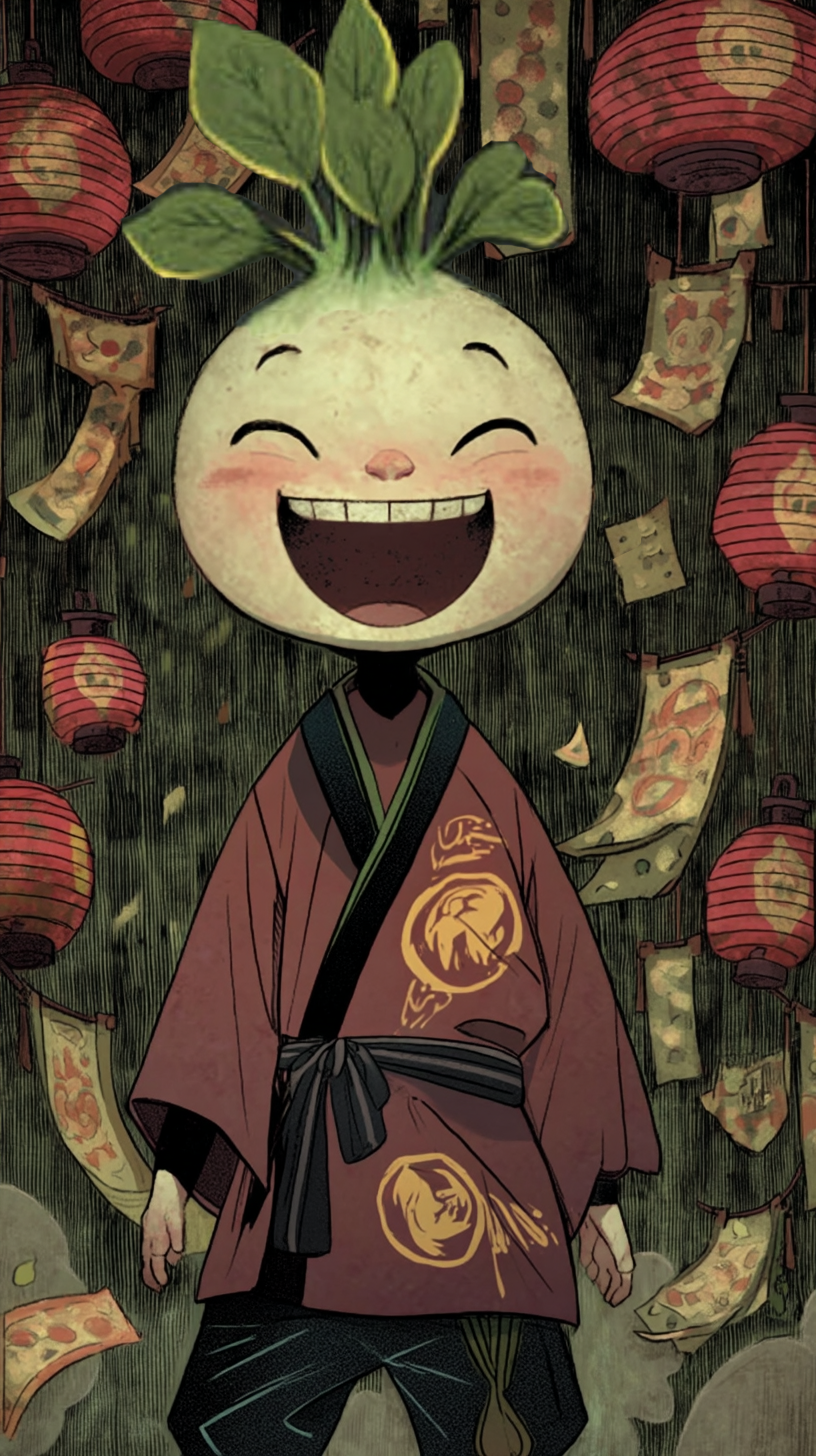

(Left Image) Prompt: An anthropomorphic turnip headed boy with a cute face, slashes through the air with his farmers scythe in a dynamic action pose. The boy is wearing period appropriate farmer's clothing. The background is a sunny day in feudal Japan. Bright vibrant warm colours. (Right Image) Style reference.

After a lot of trial and error, I found a workflow that works best for me:

- Generate a series of “training images” showing different character expressions.

- When I need Kabu to look sad, I’ll use the “sad Kabu” image reference as input.

- Then I dial omni-reference strength up or down depending on how much variety or accuracy I want.

- Often there will be 2-3 images that are close but not perfect, so I'll piece them together in Photoshop to create a new reference image that I can use in the next Midjourney slot-machine spin.

This has been the most reliable way to keep him “on model.”
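To make the steps above concrete, here is a minimal sketch of how the "pick a reference by expression, then set the strength" idea could be written down. It only builds a prompt string to paste into Midjourney; it does not call any API. The image URLs and the `build_prompt` helper are hypothetical, and the `--oref` / `--ow` parameters and the 1-1000 weight range reflect my understanding of Midjourney's omni-reference options, so check them against the current docs.

```python
# Hypothetical reference-image URLs, one per expression.
# Replace these with links to your own uploaded "training images".
EXPRESSION_REFS = {
    "happy": "https://example.com/kabu-happy.png",
    "sad": "https://example.com/kabu-sad.png",
}


def build_prompt(scene: str, expression: str, omni_weight: int = 100) -> str:
    """Return a prompt string with the matching reference image attached.

    omni_weight is assumed to map to Midjourney's --ow parameter:
    lower values allow more variety, higher values stick closer to the reference.
    """
    if expression not in EXPRESSION_REFS:
        raise KeyError(f"no reference image for expression: {expression!r}")
    if not 1 <= omni_weight <= 1000:
        raise ValueError("omni_weight is assumed to be in the range 1-1000")
    ref_url = EXPRESSION_REFS[expression]
    return f"{scene} --oref {ref_url} --ow {omni_weight}"


if __name__ == "__main__":
    # Example: a sad Kabu, leaning fairly hard on the reference image.
    print(build_prompt("Kabu sits alone in the rain", "sad", omni_weight=250))
```

Keeping the references in one lookup table also makes it easy to drop in a new Photoshopped composite as the reference for the next round.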

(Top Left) Photoshopped final image, ready for image-to-video generation, made by combining various Midjourney outputs. (Bottom Left) Photoshopped final image of "Happy Kabu", made by combining the Bottom Middle and Bottom Right images.

Different "training images" of Kabu for character reference. It's important to get a range of poses and perspectives.

From Midjourney to ComfyUI

It wasn’t until I watched a tutorial by Niko Pueringer (Corridor Digital) on training Stable Diffusion models that things really clicked. (It’s behind a paywall, but I recommend subscribing - there’s a ton of great VFX content and tutorials.)

Even though the video is a couple of years old, the core idea stuck with me: what I was doing in Midjourney was basically a pseudo version of proper model training.

That’s how I discovered ComfyUI and LoRA training. From what I understand, LoRA (low-rank adaptation) lets you train a small set of add-on weights on top of a base model for a specific character or art style, so instead of hacking consistency together with references, you’re actually teaching the model who each character is.
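For the curious, the core idea of LoRA as it is usually described fits in one line. This is the general formulation, not something specific to ComfyUI or to any particular training tool, and the symbols below are just the conventional ones:

$$
W' = W_0 + \frac{\alpha}{r} B A, \qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)
$$

Here $W_0$ is a frozen weight matrix from the base model, $B$ and $A$ are the small matrices that actually get trained, $r$ is the rank, and $\alpha$ is a scaling factor. Because only $B$ and $A$ change, the trained result is a small file that can be loaded on top of the base model.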

I’ll keep sharing updates as I start learning ComfyUI and LoRA training.

For now, I’ll leave you with a little gallery of my “failed Kabu experiments” (some of them are hilarious... so many images of Kabu without eyes - why?)